SOME KEY PROJECTS

Search for Gamma-Ray Bursts in AstroSat CZTI Data

Working with the Cadmium Zinc Telluride Imager (CZTI) onboard India's AstroSat satellite, I built and maintained an automated pipeline for detecting and characterizing short-duration, high-energy transients. The core challenge was scale and speed: the pipeline had to flag candidate events, retrieve the relevant data, and produce analysis-ready outputs with minimal manual intervention. I engineered a monitoring bot for triggered searches that significantly reduced turnaround time for event follow-up. Beyond automation, I overhauled the output file structure, fixed several bugs in the analysis pipeline, and improved overall data reliability. The work also involved evaluating spectral models and their effect on flux sensitivity across different sky regions, a problem with direct parallels to anomaly detection and threshold optimization in data-heavy domains. I contributed 13 circulars to the Gamma-Ray Burst Coordinates Network (GCN), communicating findings to the broader scientific community in a structured, time-sensitive format.
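As a hypothetical illustration of the rate-excess logic that a transient search can be built on (this is not the actual CZTI pipeline), the sketch below scans a binned light curve, estimates the background as the median of the preceding bins, and flags bins whose excess exceeds a threshold in units of $\sqrt{B}$, the Gaussian approximation to Poisson noise. The function name, window length, threshold, and toy light curve are all assumptions.

```python
import statistics
from math import sqrt

def find_transients(counts, window=20, threshold=5.0):
    """Flag bins whose counts exceed a trailing-median background (hypothetical helper).

    counts    -- photon counts per time bin
    window    -- number of preceding bins used to estimate the background
    threshold -- detection threshold in units of sqrt(background)
    Returns a list of (bin_index, significance) tuples.
    """
    candidates = []
    for i in range(window, len(counts)):
        # Median of the preceding bins is robust to a few outlier bins.
        bkg = statistics.median(counts[i - window:i])
        if bkg <= 0:
            continue  # significance undefined without a positive background
        sigma = (counts[i] - bkg) / sqrt(bkg)
        if sigma >= threshold:
            candidates.append((i, sigma))
    return candidates

# Toy light curve: flat background of 100 counts/bin with a short burst at bins 50-51.
light_curve = [100] * 80
light_curve[50] = 180
light_curve[51] = 150
print(find_transients(light_curve))  # [(50, 8.0), (51, 5.0)]
```

A production search would typically repeat this over several bin widths and energy bands and account for the number of trials; those refinements are omitted here.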

Exploratory Data Analysis on the Netflix Titles Dataset

This project involved an end-to-end exploratory analysis of the Netflix Titles dataset using SQL and Python. Starting from raw data, I cleaned and structured the dataset, then used SQL queries (aggregations, window functions, and CASE logic) to surface patterns in content type, genre distribution, release trends, and regional availability. The goal was to move beyond surface-level observations and identify meaningful trends: how the platform's content mix has shifted over time, which markets are most heavily represented, and how rating distributions vary across content categories. Findings were visualized in Tableau Public to make the insights accessible and actionable. The project demonstrates a full analytics workflow from data wrangling to insight communication.
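To illustrate the CASE-plus-window-function pattern described above, the sketch below runs a query through Python's built-in `sqlite3` module. The table name `netflix_titles` and the columns `type`, `release_year`, and `rating` are assumed to mirror the public Netflix Titles CSV, the rating-to-audience buckets are an illustrative choice, and the five rows are made up; window functions require SQLite 3.25 or newer.

```python
import sqlite3

# In-memory database with a few made-up rows; a real analysis would load the full CSV.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE netflix_titles (title TEXT, type TEXT, release_year INTEGER, rating TEXT)"
)
rows = [
    ("Title A", "Movie", 2019, "TV-MA"),
    ("Title B", "Movie", 2020, "PG-13"),
    ("Title C", "Movie", 2020, "R"),
    ("Title D", "TV Show", 2021, "TV-MA"),
    ("Title E", "TV Show", 2021, "TV-Y7"),
]
conn.executemany("INSERT INTO netflix_titles VALUES (?, ?, ?, ?)", rows)

query = """
WITH bucketed AS (
    SELECT
        type,
        -- CASE logic: collapse individual ratings into broad (assumed) audience groups
        CASE
            WHEN rating IN ('TV-MA', 'R', 'NC-17') THEN 'Adult'
            WHEN rating IN ('TV-14', 'PG-13') THEN 'Teen'
            ELSE 'Family'
        END AS audience
    FROM netflix_titles
)
SELECT
    type,
    audience,
    COUNT(*) AS n_titles,
    -- Window function over the grouped result: share of each group within its content type
    ROUND(1.0 * COUNT(*) / SUM(COUNT(*)) OVER (PARTITION BY type), 2) AS share
FROM bucketed
GROUP BY type, audience
ORDER BY type, audience
"""
for row in conn.execute(query):
    print(row)
```

The `SUM(COUNT(*)) OVER (PARTITION BY type)` term is evaluated after `GROUP BY`, so each group's count is divided by the total for its content type without a second query or self-join.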

Study of Solar Flares using XSM Catalog

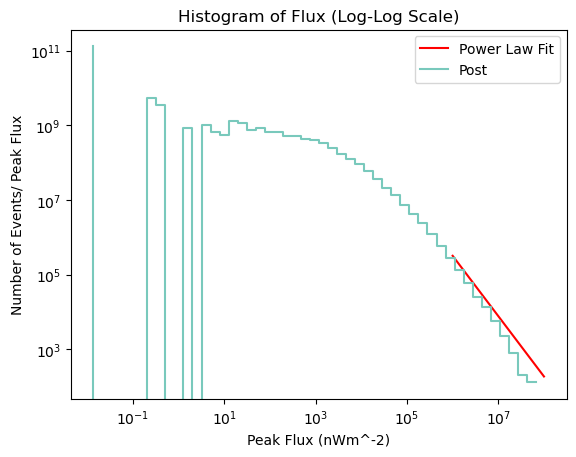

Using X-ray light curves from the Solar X-ray Monitor (XSM) aboard the Chandrayaan-2 orbiter, I conducted a quantitative study of solar flare behavior. The work centered on analyzing background-subtracted peak fluxes and fitting a power-law distribution to characterize flare intensities. I applied a Monte Carlo approach to estimate the power-law exponent α, adding statistical rigour to what is otherwise a noisy, high-variance dataset. The project involved substantial time-series processing, handling of irregularly sampled data, and principled choices about background estimation: challenges that translate well to any domain dealing with irregular event data and signal extraction.
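For a continuous power law $p(S) \propto S^{-\alpha}$ above a threshold $S_{\min}$, the standard maximum-likelihood estimate of the index is $\hat\alpha = 1 + n \big/ \sum_i \ln(S_i/S_{\min})$. The sketch below pairs that estimator with one possible Monte Carlo scheme: resampling the flare list with replacement and perturbing each peak flux by its measurement error, then refitting many times and reading off the spread of $\hat\alpha$. The threshold, the 5% error model, the helper names, and the synthetic fluxes are assumptions for illustration; the XSM analysis itself may have used a different Monte Carlo setup.

```python
import math
import random
import statistics

def alpha_mle(fluxes, s_min):
    """Maximum-likelihood power-law index using only fluxes at or above s_min."""
    tail = [s for s in fluxes if s >= s_min]
    return 1.0 + len(tail) / sum(math.log(s / s_min) for s in tail)

def alpha_monte_carlo(fluxes, errors, s_min, n_trials=200, seed=0):
    """Monte Carlo spread of the fitted index (hypothetical helper).

    Each trial resamples the flare list with replacement (bootstrap) and
    perturbs every resampled flux by a Gaussian draw from its 1-sigma error,
    then refits. Returns the mean and standard deviation of the fitted indices.
    """
    rng = random.Random(seed)
    n = len(fluxes)
    alphas = []
    for _ in range(n_trials):
        idx = rng.choices(range(n), k=n)
        # Draws pushed below s_min (including any negative ones) are dropped by alpha_mle.
        perturbed = [rng.gauss(fluxes[i], errors[i]) for i in idx]
        alphas.append(alpha_mle(perturbed, s_min))
    return statistics.mean(alphas), statistics.stdev(alphas)

def sample_power_law(n, alpha, s_min, rng):
    """Draw n values from p(S) proportional to S**-alpha (S >= s_min) by inverse-transform sampling."""
    return [s_min * (1.0 - rng.random()) ** (-1.0 / (alpha - 1.0)) for _ in range(n)]

# Synthetic stand-in for background-subtracted peak fluxes (arbitrary units).
data_rng = random.Random(42)
true_alpha, s_min = 1.8, 1.0
peak_fluxes = sample_power_law(1000, true_alpha, s_min, data_rng)
flux_errors = [0.05 * s for s in peak_fluxes]  # assumed 5% measurement errors
mean_alpha, std_alpha = alpha_monte_carlo(peak_fluxes, flux_errors, s_min)
print(f"alpha = {mean_alpha:.2f} +/- {std_alpha:.2f}")
```

Combining bootstrap resampling with error perturbation folds both the finite-sample scatter and the flux uncertainties into a single spread for $\hat\alpha$; with real data, the choice of $S_{\min}$ matters as much as the estimator.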

Exploring Radiative Processes using Radio Astronomy Data Analysis

This project involved multi-wavelength data analysis across radio, infrared, and visible bands using FITS image data from the CIRADA catalog. I worked with CASA software to process raw telescope data and generate publication-quality visualizations. A key component was applying Markov Chain Monte Carlo (MCMC) methods to extract faint signals buried in noisy data: specifically, recovering the afterglow of the gravitational-wave event GW170817 from radio observations. I also investigated fast radio bursts (FRBs) and computed their dispersion measure (DM) values to characterize burst properties. The project built strong intuition for uncertainty quantification, model fitting, and the trade-offs involved in working with sparse, high-dimensional observational data.
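The dispersion measure follows from the frequency-dependent arrival delay of a burst. Under the standard cold-plasma dispersion law, the extra delay at a lower frequency $\nu_{\mathrm{lo}}$ relative to a higher one $\nu_{\mathrm{hi}}$ is $\Delta t = k_{\mathrm{DM}}\,\mathrm{DM}\,(\nu_{\mathrm{lo}}^{-2} - \nu_{\mathrm{hi}}^{-2})$ with $k_{\mathrm{DM}} \approx 4.149$ ms GHz$^2$ pc$^{-1}$ cm$^3$, so DM can be solved for directly. The functions below are a minimal sketch of that relation and its inversion; the function names and the burst parameters in the usage lines are invented for illustration.

```python
# Dispersion constant 4.148808e3 s MHz^2 pc^-1 cm^3, expressed in ms GHz^2 pc^-1 cm^3.
K_DM_MS = 4.148808

def dispersion_delay_ms(dm, f_lo_ghz, f_hi_ghz):
    """Extra arrival delay (ms) at f_lo relative to f_hi for a given DM (pc cm^-3)."""
    return K_DM_MS * dm * (f_lo_ghz ** -2 - f_hi_ghz ** -2)

def dm_from_delay(delay_ms, f_lo_ghz, f_hi_ghz):
    """Invert the cold-plasma dispersion law: DM (pc cm^-3) from a measured delay (ms)."""
    return delay_ms / (K_DM_MS * (f_lo_ghz ** -2 - f_hi_ghz ** -2))

# Hypothetical burst with DM = 500 pc cm^-3 observed across 1.2-1.5 GHz.
delay = dispersion_delay_ms(500.0, 1.2, 1.5)
print(f"delay = {delay:.1f} ms")                        # delay = 518.6 ms
print(f"DM    = {dm_from_delay(delay, 1.2, 1.5):.1f}")  # DM    = 500.0
```

In practice the delay is usually measured by fitting the dispersive sweep across many frequency channels (or by searching over trial DMs) rather than from just two frequencies, but the same relation underlies both approaches.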


Geometrical Extension of Einstein's General Relativity

This theoretical physics project involved studying the teleparallel formulation of gravity and developing a geometrical extension of it using advanced mathematical frameworks. The practical component was fitting the model to observational data: I used low-redshift datasets to constrain the free parameters of the $f(R, G) = R^{n} G^{1-n}$ model via Markov Chain Monte Carlo (MCMC) methods. The core skills here (building a parameterized model, defining a likelihood, and using sampling techniques to explore a multi-dimensional parameter space) are broadly applicable to statistical modelling problems across domains.
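The model-likelihood-sampler workflow can be sketched with a random-walk Metropolis algorithm and a Gaussian likelihood, $\ln\mathcal{L} = -\tfrac{1}{2}\sum_i \big(H_i - H_{\mathrm{model}}(z_i)\big)^2/\sigma_i^2$. Because the expansion history predicted by the $f(R, G)$ model is not given here, the sketch substitutes flat ΛCDM, $H(z) = H_0\sqrt{\Omega_m(1+z)^3 + 1 - \Omega_m}$, as a stand-in model and fits synthetic low-redshift $H(z)$ points generated from it. The priors, proposal step sizes, burn-in length, helper names, and data are all assumptions for illustration.

```python
import math
import random
import statistics

def hubble_lcdm(z, h0, om):
    """Stand-in model (flat LambdaCDM, not f(R, G)): expansion rate H(z) in km/s/Mpc."""
    return h0 * math.sqrt(om * (1 + z) ** 3 + 1 - om)

def log_posterior(theta, data):
    """Gaussian log-likelihood plus assumed flat priors 50 < H0 < 100, 0 < Om < 1."""
    h0, om = theta
    if not (50 < h0 < 100 and 0 < om < 1):
        return -math.inf
    chi2 = sum(((h - hubble_lcdm(z, h0, om)) / s) ** 2 for z, h, s in data)
    return -0.5 * chi2

def metropolis(data, start, step, n_steps=20000, seed=1):
    """Random-walk Metropolis sampler with independent Gaussian proposals."""
    rng = random.Random(seed)
    theta = list(start)
    logp = log_posterior(theta, data)
    chain = []
    for _ in range(n_steps):
        proposal = [t + rng.gauss(0, s) for t, s in zip(theta, step)]
        logp_new = log_posterior(proposal, data)
        # Accept with probability min(1, p_new / p_old), compared in log space;
        # 1 - random() lies in (0, 1], so the log is always defined.
        if math.log(1.0 - rng.random()) < logp_new - logp:
            theta, logp = proposal, logp_new
        chain.append(tuple(theta))
    return chain

# Synthetic H(z) points at z = 0.1 ... 1.5 with 3 km/s/Mpc Gaussian noise.
data_rng = random.Random(0)
true_h0, true_om, sigma = 70.0, 0.3, 3.0
data = [
    (z, hubble_lcdm(z, true_h0, true_om) + data_rng.gauss(0, sigma), sigma)
    for z in (0.1 * k for k in range(1, 16))
]

chain = metropolis(data, start=(65.0, 0.4), step=(1.0, 0.02))
burned = chain[5000:]  # discard burn-in
h0_samples = [h0 for h0, _ in burned]
om_samples = [om for _, om in burned]
print(f"H0      = {statistics.mean(h0_samples):.1f} +/- {statistics.stdev(h0_samples):.1f}")
print(f"Omega_m = {statistics.mean(om_samples):.3f} +/- {statistics.stdev(om_samples):.3f}")
```

A hand-written sampler keeps every step visible; for real multi-parameter fits, an affine-invariant ensemble sampler such as the emcee package handles strongly correlated parameters (as $H_0$ and $\Omega_m$ are in this toy fit) more gracefully.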